We love our digital tools.

We just don't trust them.

Valeria Adani, Partner at Projects by IF, distills one of technology's most vexing paradoxes into ten words.

This, one of her favourite quotes, cuts to the heart of our relationship with artificial intelligence as it hurtles into uncharted territory, leaving everyone – users, businesses and regulators alike – scrambling to keep pace.

This regulatory lag carries consequences.

If AI remains untrusted because rules are patchy, adoption will stall.

Businesses face an uncomfortable question: how do you procure and deploy a technology when the rulebook is still being drafted?

Regulators face another: how do you oversee something that reinvents itself monthly?

On 10 March 2026, the Digital Regulation Cooperation Forum (DRCF) convened its second Responsible AI Forum in London. Regulators, technology firms, academics and civil society representatives gathered to wrestle with these questions. What follows are the practical lessons for senior leadership teams and general counsel seeking to navigate this shifting landscape.

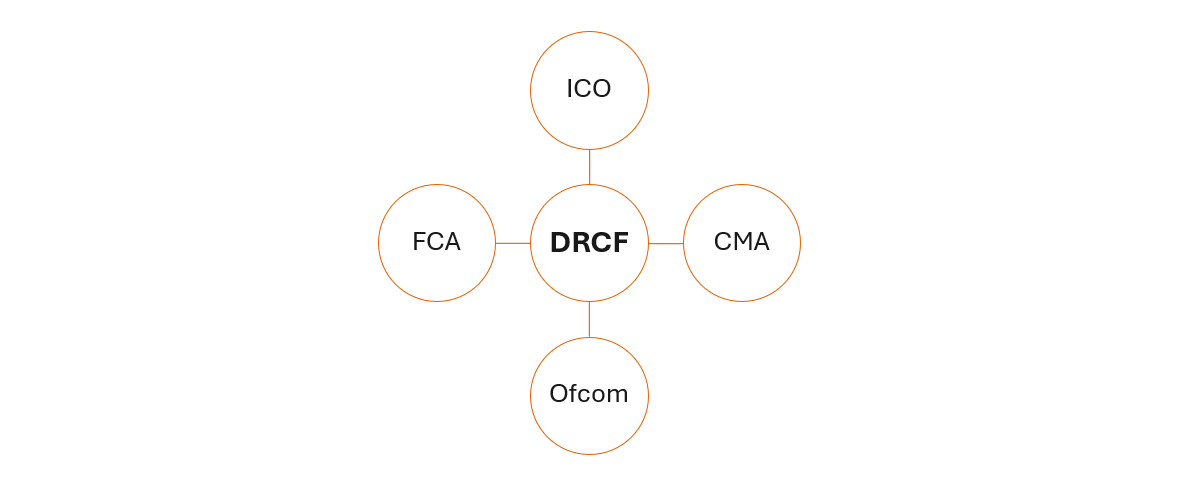

What is the DRCF?

The DRCF launched in 2020 as a voluntary forum to strengthen cooperation between regulators, given the unique challenges posed by regulating online platforms. It brings together four UK regulators with responsibilities for digital oversight: the Competition and Markets Authority; the Financial Conduct Authority; the Information Commissioner's Office; and Ofcom.

Key takeaways

Here are our key takeaways for senior leadership teams and general counsel from the day:

Regulation isn't what it used to be

Keynote speaker Kenneth Cukier, a journalist at The Economist and co-author of Big Data, argued that we must "reimagine responsibility." Should regulators move from 'do no harm' ("too simple" a standard for AI, he thinks) to a positive duty of care?

Regulation is hard, he conceded, but AI demands a fundamental change in our ways of thinking.

The implications for businesses are clear: engage proactively with regulators, perhaps through sandbox initiatives, and be prepared to reshape business models if necessary.

This event confirmed a broader shift we've observed. Many regulators now care less about technical compliance with detailed rules and far more about outcomes. And while businesses wait for prescriptive guidance, standards are filling the gaps. Sheldon Mills, who is currently conducting a consultation on how AI will reshape retail financial services, suggested that "voluntary standards will probably take on a role" too.

Given the pace of AI development, businesses should expect this approach to continue and be ready for regulation, in whatever form it takes, to scrutinise wider societal harms. Getting involved in those bigger conversations now is prudent.

Responsible AI: a quick reminder

The UK hasn't created a single AI regulator. Instead, it's asking existing bodies (the FCA, Ofcom, the ICO and others) to police AI within their own patches.

Five principles currently sit at the heart of this approach:

- safety, security and robustness;

- transparency and explainability;

- fairness;

- accountability and governance; and

- contestability and redress.

Each regulator interprets the principles for its own remit. The government's wager? Allow AI to flourish while guarding human rights, democratic norms and the rule of law.

The hope is that flexibility spurs growth without strangling new technology in red tape.

The danger is that problems slip through the cracks between regulators. At the event, Dame Melanie Dawes, Ofcom's CEO and DRCF chair, acknowledged this and said that regulators want to "build up the muscle fibre of working together."

Whether this arrangement should eventually rest on a statutory footing remains an open question. For now, businesses should recognise that coordination between regulators is still finding its feet, and gaps may emerge as each body applies the framework differently.

Getting the timing right

What should businesses do?

Firstly, don't wait: if you're looking to use AI in your business but are drumming your fingers until the UK has a clearer or more settled regulatory regime for AI, stop. You'll likely be waiting longer than you think. And you might miss the proverbial boat.

But don't rush either: a typical meme on LinkedIn, with pictures of CEOs, COOs, CTOs and other members of the C-suite wielding placards, goes as follows: "What do we want?" "AI!" "Why do we want it?" "We don't know!" "When do we want it?" "Now!" For this group, there's no strategy beyond FOMO (fear of missing out).

The key for businesses is to find the balance between progressing too slowly and progressing too quickly. Don't let the perfect be the enemy of the good, but don't go 'all in' without a second thought. The sweet spot is a measured and strategic approach.

Fostering trust

If the day had a word cloud, 'trust' would loom largest. Trust is the glue holding AI adoption together: without it, AI will simply gather dust as expensive shelfware.

The Future @ Work 2026 report, published by Lewis Silkin in January 2026, noted that cultural resistance (including fear of job losses and mistrust of AI outputs) remains a key obstacle to adoption.

Speakers throughout the day confirmed this dynamic. How much human oversight AI products require will vary case by case, but getting that balance right is essential for building confidence.

Leadership

Tim Gordon, Partner at Best Practice AI, reminded the audience that AI "will be at the heart of every business model" and isn't just something to be discussed at, say, a quarterly meeting. It's moving from a compliance issue "to a general management issue".

As Adani says, leadership will have to ask hard questions, like "is AI behaving like it should behave?" Keeping a keen eye on AI is the responsibility of everyone in the business, not just the IT team.

What comes next

It was a busy day, rich with insights. In the next article, we examine the emerging challenges of agentic AI and what regulators are watching most closely.

We would welcome your thoughts.

What concerns you most about AI?

What excites you?

Do get in touch with the team.